[ CLASSIFIED_INTEL ]

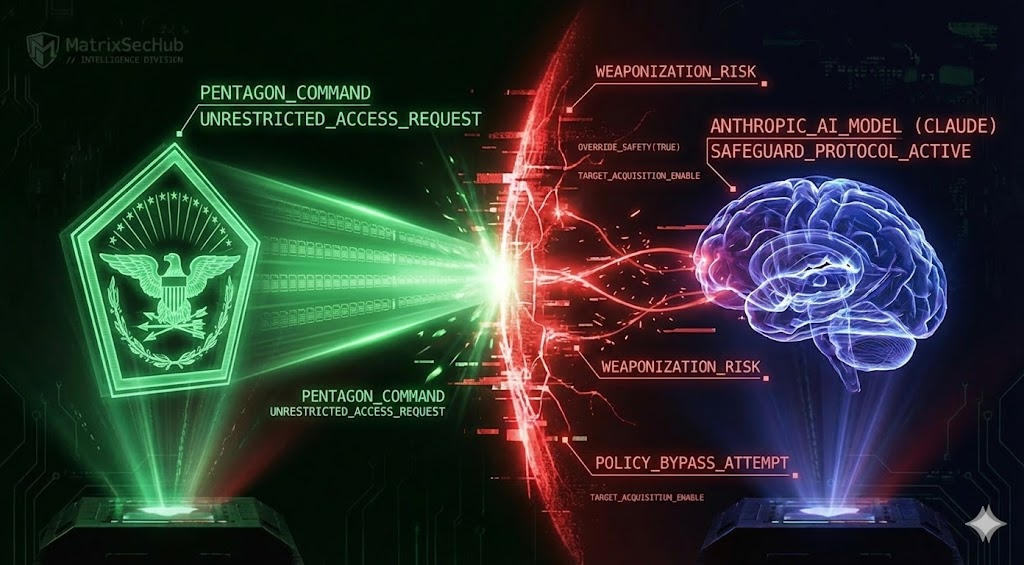

CASE_LOG_000005 // GOVERNANCE_FRACTURE

DATE: 2026-02-16

ASSET: ANTHROPIC_CLAUDE // CLASSIFIED_NET

THREAT: CRITICAL

// 01. SYSTEM_ANALYSIS

The U.S. Pentagon is threatening to terminate its contract with Anthropic over the company's refusal to relax its safety policies to permit use for “all lawful purposes.” This standoff exposes a systemic vulnerability in the defense supply chain: Concentration Risk.

The DoD is currently pressuring the four vendors (Anthropic, OpenAI, Google, and xAI) on which its entire AI modernization effort depends. By demanding that these models run on classified networks “without many of the typical restrictions,” the DoD is introducing UNCONTROLLED_AGENTS into secure environments.

Additionally, intelligence confirms that Claude was already used in a Palantir-partnered operation targeting Nicolás Maduro, showing that OPERATIONAL_LEAKAGE occurred before governance policies were finalized.

// 02. BENCHMARKING


- Policy Alignment: 0.0% (DoD demands “All Lawful Purposes”; Anthropic refuses Lethal Autonomy)[cite: 47, 49]. [cite_start]

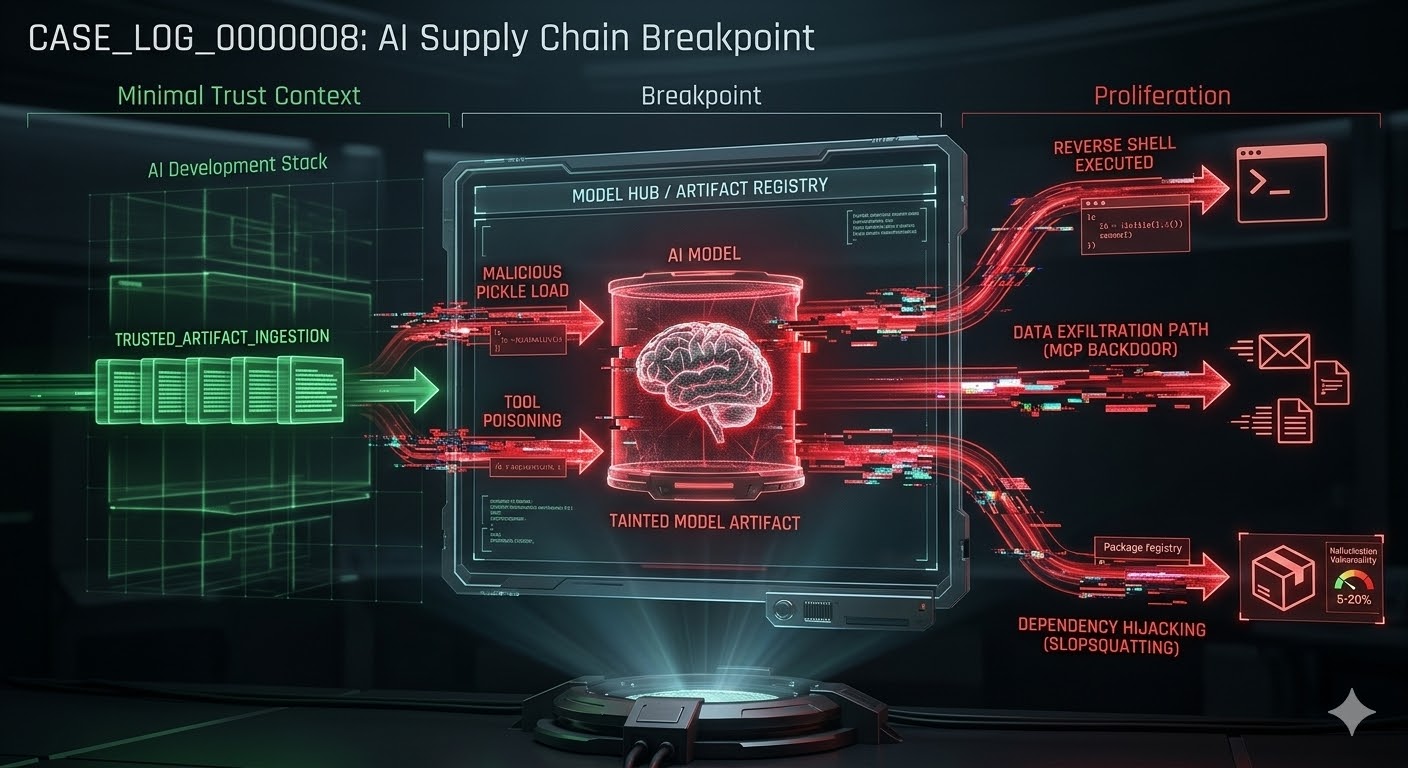

- Architecture Integrity: COMPROMISED (Push for “waiver-based” access on classified nets bypasses Zero Trust)[cite: 58, 83]. [cite_start]

- Global Posture: ISOLATED (UN approved 40-member AI panel over U.S. objections)[cite: 63, 106].

// 03. COMPLIANCE_MAPPING

| VECTOR | DOD REQUIREMENT | VENDOR POLICY | RESULT |
|---|---|---|---|
| LETHALITY | “All Lawful Purposes” | No Autonomous Weapons | DEADLOCK |
| NETWORK | Classified / Unrestricted | Commercial Safeguards | DRIFT |
| ACCESS | Broad Military Use | No Mass Surveillance | DENIED |

// 04. MITIGATION ROADMAP

- >> 1. EMERGENCY: Halt all “waiver-based” deployments. If the model’s safety filter must be disabled for the mission, the model is unfit for the architecture.

- >> 2. ARCHITECTURAL: Implement Attribute-Based Access Control (ABAC) at the inference layer. Do not rely on vendor Terms of Service to stop a kill chain.

- >> 3. GOVERNANCE: Codify “Rules of Engagement” for AI agents. “Lawful” is a legal term; “Non-Lethal” is a technical constraint. They are not synonyms.
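Recommendations 1 through 3 can be made concrete at the inference gateway. Below is a minimal, hypothetical ABAC sketch in Python. The attribute names (`clearance`, `network`, `purpose`, `waiver_id`), the clearance ranks, the network table, and the prohibited-purpose list are illustrative assumptions, not a DoD or vendor schema. The check denies by default, refuses any request that depends on a waiver, and evaluates rules of engagement as hard technical constraints before clearance is considered.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Hypothetical clearance ordering; higher rank = broader access.
CLEARANCE_RANK = {"UNCLASSIFIED": 0, "SECRET": 1, "TOP_SECRET": 2}

# Hypothetical minimum clearance per network (resource attribute).
NETWORK_MIN_CLEARANCE = {"commercial": "UNCLASSIFIED", "classified_net": "SECRET"}

# Hypothetical rules of engagement: purposes the gateway never serves,
# whatever the caller's clearance or legal authority.
PROHIBITED_PURPOSES = frozenset({"autonomous_targeting", "mass_surveillance"})


@dataclass(frozen=True)
class Request:
    clearance: str                    # subject attribute
    network: str                      # resource/environment attribute
    purpose: str                      # action attribute (declared mission purpose)
    waiver_id: Optional[str] = None   # set if access relies on a policy waiver


def authorize(req: Request) -> Tuple[bool, str]:
    """Evaluate ABAC rules in order; anything not explicitly allowed is denied."""
    # Rule 1: a waiver never unlocks access (recommendation 1).
    if req.waiver_id is not None:
        return False, "waiver-based access refused"
    # Rule 2: rules of engagement are hard technical constraints (recommendation 3).
    if req.purpose in PROHIBITED_PURPOSES:
        return False, f"purpose '{req.purpose}' prohibited by rules of engagement"
    # Rule 3: unknown networks are denied by default.
    required = NETWORK_MIN_CLEARANCE.get(req.network)
    if required is None:
        return False, f"unknown network '{req.network}'"
    # Rule 4: clearance must meet the network minimum; unknown clearances rank below all.
    if CLEARANCE_RANK.get(req.clearance, -1) < CLEARANCE_RANK[required]:
        return False, "insufficient clearance for network"
    return True, "allowed"


def gated_inference(req: Request, prompt: str, model: Callable[[str], str]) -> str:
    """Call the model only after the policy check passes (recommendation 2)."""
    allowed, reason = authorize(req)
    if not allowed:
        raise PermissionError(reason)
    return model(prompt)


if __name__ == "__main__":
    print(authorize(Request("SECRET", "classified_net", "logistics")))
    # (True, 'allowed')
    print(authorize(Request("TOP_SECRET", "classified_net", "autonomous_targeting")))
    # (False, "purpose 'autonomous_targeting' prohibited by rules of engagement")
```

Because the check runs in front of the model call, it holds even if the vendor's own safeguards are relaxed. This is the practical meaning of not relying on Terms of Service to stop a kill chain.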

[1] Reuters: Pentagon Threatens to Cut Off Anthropic

[2] CNBC: Standoff Over AI Safeguards

[3] UN: Global AI Panel Approved Over US Objections

// MATRIXSECHUB INTELLIGENCE DIVISION