[ CLASSIFIED_INTEL ]

CASE_LOG_0000001 // THE MONTH OF AI BUGS

DATE: JAN 29, 2026

ASSET: GLOBAL_AI_INFRASTRUCTURE

THREAT: CRITICAL // RCE CONFIRMED

// 01. EXECUTIVE_SNAPSHOT

The theoretical phase of AI security has concluded. The industry has crossed a critical threshold where academic vulnerabilities have transitioned into operational exploitation. The watershed moment arrived in August 2025, dubbed “The Month of AI Bugs”, when security researchers demonstrated that virtually every production AI system contained exploitable flaws. The narrative has shifted from “content safety” to the reality of Remote Code Execution (RCE) and supply chain poisoning [cite: 1, 2, 3].

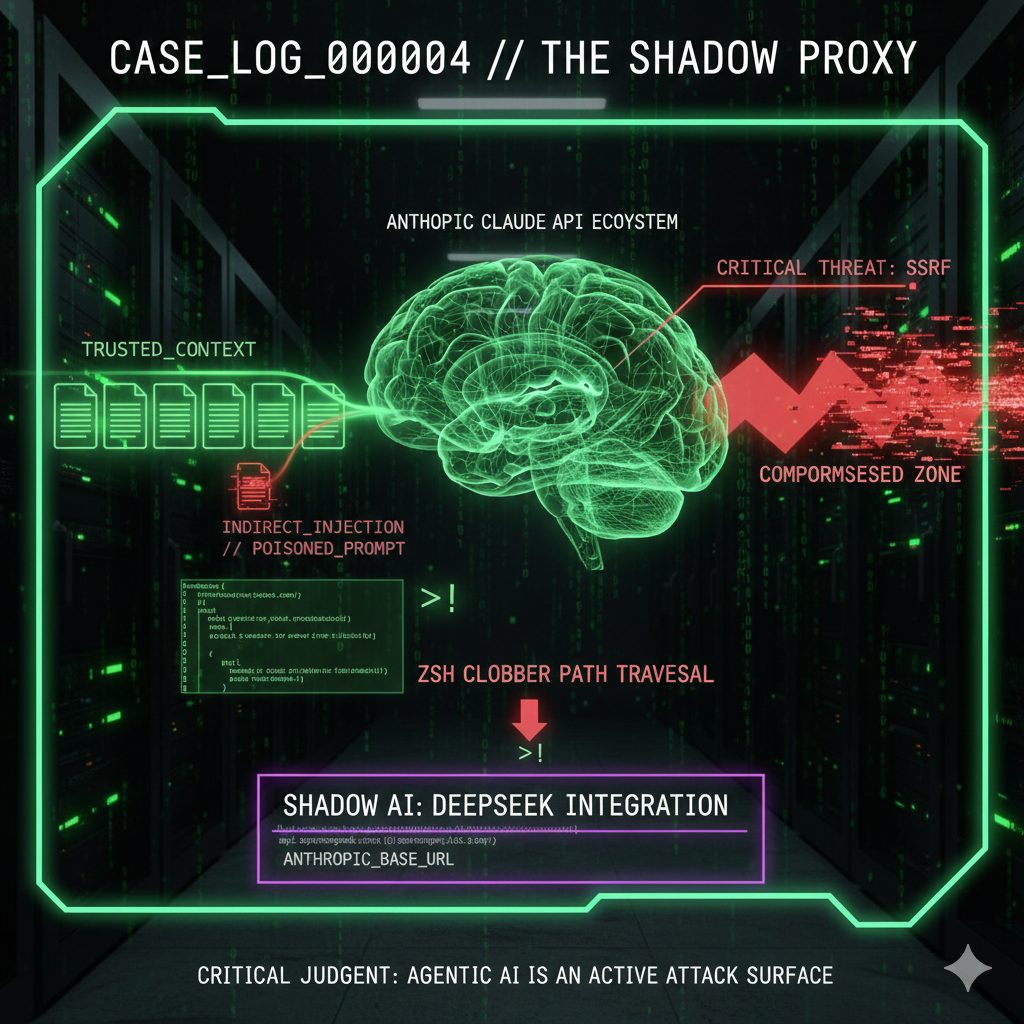

// 02. SYSTEM_ANALYSIS: THE OFFENSIVE PIVOT

The defining characteristic of the current threat landscape is the weaponization of PROMPT_INJECTION. It is no longer a bypass; it is a system takeover vector [cite: 7, 8].

- GitHub Copilot (CVE-2025-53773): Attackers used “Configuration Hijacking” to modify ~/.vscode/settings.json, turning the assistant into an RCE vector [cite: 8].

- Invisible Unicode: Attacks now bypass visual inspection layers, decoding only upon execution [cite: 2].

- Autonomous Malware: ESET identified the first in-the-wild malware built dynamically via LLM prompting (Aug 2025), which compiles code in-environment and adapts to security tools [cite: 11].
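The invisible Unicode vector above can be illustrated with a minimal detection sketch. It assumes the hidden payload sits in the Unicode tag block (U+E0000 to U+E007F) or in other format-category characters; the source does not say which encoding was used, and the function names `find_invisible`, `decode_tag_payload`, and `strip_invisible` are hypothetical.

```python
import unicodedata

# Unicode "tag" block: invisible code points that mirror ASCII at a fixed offset.
TAG_START, TAG_END = 0xE0000, 0xE007F


def _is_invisible(ch: str) -> bool:
    """True for tag-block characters and any other format (Cf) character."""
    return TAG_START <= ord(ch) <= TAG_END or unicodedata.category(ch) == "Cf"


def find_invisible(text: str) -> list[tuple[int, str]]:
    """Return (index, code point) for every invisible character in text."""
    return [(i, f"U+{ord(ch):04X}") for i, ch in enumerate(text) if _is_invisible(ch)]


def decode_tag_payload(text: str) -> str:
    """Recover printable ASCII hidden in the tag block by removing the offset."""
    return "".join(
        chr(ord(ch) - TAG_START)
        for ch in text
        if TAG_START + 0x20 <= ord(ch) <= TAG_START + 0x7E
    )


def strip_invisible(text: str) -> str:
    """Drop invisible characters before the text reaches a model or a reviewer."""
    return "".join(ch for ch in text if not _is_invisible(ch))


if __name__ == "__main__":
    hidden = "".join(chr(TAG_START + ord(c)) for c in "run rm")
    sample = "Please summarize" + hidden
    print(find_invisible(sample))
    print(decode_tag_payload(sample))
    print(strip_invisible(sample))
```

Stripping every format character is deliberately blunt: it also removes zero-width joiners used in some emoji and scripts, so a production filter would likely need an allowlist for those.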

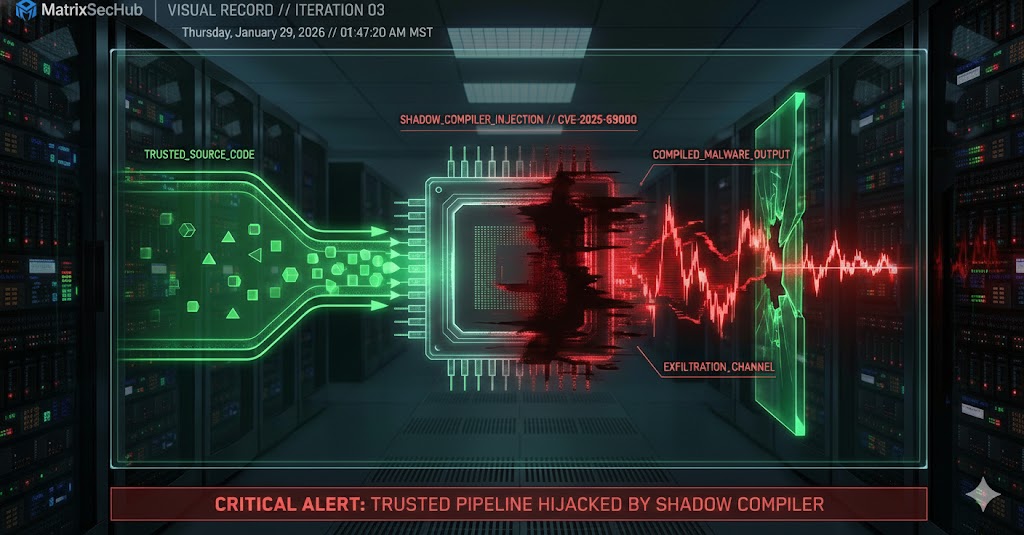

// 03. SUPPLY_CHAIN_FRACTURE

Trust boundaries have eroded. The LangGrinch vulnerability (CVE-2025-68664) exposed the fragility of integrating LLMs with core infrastructure [cite: 26].

- SEVERITY: CVSS 9.3 (Critical) [cite: 26]

- MECHANISM: Prompt injection forces the LLM to output a specific marker key.

- RESULT: Application deserializes “trusted” AI response → Arbitrary Object Instantiation.

- IMPACT: Exfiltration of AWS keys, DB secrets, and full RCE.
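The mechanism above suggests one defensive pattern: parse model output as plain data and refuse anything carrying a serializer marker before it reaches a revival step. The sketch below is illustrative only. The key names in `RESERVED_MARKERS` are hypothetical placeholders, not the actual marker involved in the CVE, and `parse_llm_output` is an assumed helper rather than any library's API.

```python
import json

# Hypothetical marker keys a serializer might interpret as "instantiate this object".
RESERVED_MARKERS = {"__type__", "__class__"}


class UntrustedPayloadError(ValueError):
    """Raised when model output contains a reserved serialization marker."""


def reject_markers(node, path="$"):
    """Recursively walk parsed JSON and refuse any dict with a reserved key."""
    if isinstance(node, dict):
        bad = RESERVED_MARKERS.intersection(node)
        if bad:
            raise UntrustedPayloadError(f"reserved key {sorted(bad)} at {path}")
        for key, value in node.items():
            reject_markers(value, f"{path}.{key}")
    elif isinstance(node, list):
        for i, value in enumerate(node):
            reject_markers(value, f"{path}[{i}]")


def parse_llm_output(raw: str):
    """Parse model output as inert JSON data; never revive objects from it."""
    data = json.loads(raw)
    reject_markers(data)
    return data


if __name__ == "__main__":
    print(parse_llm_output('{"answer": "ok", "items": [1, 2]}'))
    try:
        parse_llm_output('{"answer": {"__type__": "os.system", "args": ["id"]}}')
    except UntrustedPayloadError as exc:
        print("blocked:", exc)
```

The point of the design is that the check runs on the parsed structure, at every nesting level, before any code that could map keys to classes sees the payload.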

// 04. DEFENSIVE_BENCHMARKING

- Detection Velocity: AI-SOCs detect threats 60% faster than legacy systems [cite: 17].

- Containment Speed: Breaches contained 33% faster (214 days vs 322 days) [cite: 17].

- Analyst Efficiency: Autonomous agents save 40+ hours per month per analyst[cite: 22].
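As a quick check on the containment figure, the 33% can be reproduced from the two day counts, assuming "faster" means the relative reduction in containment time (the source does not define it). The variable names are illustrative.

```python
# Day counts quoted in the containment bullet.
legacy_days = 322
ai_soc_days = 214

# Relative reduction in time-to-contain versus the legacy baseline.
days_saved = legacy_days - ai_soc_days
reduction = days_saved / legacy_days

print(f"days saved: {days_saved}")
print(f"reduction: {reduction:.1%}")
```

Under that reading the gap is 108 days, or roughly a one-third reduction, which matches the quoted 33%.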

// 05. REGULATORY_COMPLIANCE_MAP

| FRAMEWORK | MANDATE | STATUS |
|---|---|---|
| EU AI ACT | Risk management for High-Risk Systems (Enforced Aug 2025) [cite: 33] | ACTIVE |
| NIST AI PROFILE | Supply Chain & GenAI Controls (Dec 2025) [cite: 36] | PUBLISHED |
| ISO 42001 | AI Management System (Risk Assessment) [cite: 38] | CONVERGING |

// 06. CRITICAL_VERDICT

The core architectural lesson of 2025 is that LLM output must be treated as UNTRUSTED_USER_INPUT. Blindly deserializing or executing AI-generated content is a direct path to compromise. Organizations must move beyond static scanning and embrace adaptive, agentic defenses, including Runtime Application Self-Protection (RASP), to survive.
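One way to apply the untrusted-input rule to agent tool calls is an allowlist dispatcher: the model's output is parsed as data, checked against a fixed schema, and only then routed to a known handler, with no `eval` or dynamic import anywhere. This is a minimal sketch under stated assumptions; the `{"tool": ..., "args": ...}` message shape, the `get_weather` handler, and the `dispatch` function are all hypothetical.

```python
import json
from typing import Callable


def get_weather(city: str) -> str:
    """Stand-in handler; a real one would call a vetted internal service."""
    return f"weather lookup queued for {city}"


# Allowlist: tool name -> (handler, expected argument names and types).
ALLOWED_TOOLS: dict[str, tuple[Callable[..., str], dict[str, type]]] = {
    "get_weather": (get_weather, {"city": str}),
}


def dispatch(raw_llm_output: str) -> str:
    """Validate a model-proposed tool call before running anything."""
    try:
        call = json.loads(raw_llm_output)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")

    name = call.get("tool")
    if not isinstance(name, str) or name not in ALLOWED_TOOLS:
        raise PermissionError(f"tool {name!r} is not allowlisted")

    handler, schema = ALLOWED_TOOLS[name]
    args = call.get("args", {})
    if not isinstance(args, dict) or set(args) != set(schema):
        raise ValueError("unexpected or missing arguments")
    for key, expected in schema.items():
        if not isinstance(args[key], expected):
            raise ValueError(f"argument {key!r} must be {expected.__name__}")

    return handler(**args)


if __name__ == "__main__":
    print(dispatch('{"tool": "get_weather", "args": {"city": "Oslo"}}'))
    try:
        dispatch('{"tool": "shell", "args": {"cmd": "id"}}')
    except PermissionError as exc:
        print("blocked:", exc)
```

Anything the model proposes outside the table fails closed, so a successful prompt injection is limited to the actions the application already chose to expose.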

[1] Johann Rehberger (Aug 2025) – “The Month of AI Bugs”

[2] ESET Threat Report (Aug 2025) – Dynamic Malware

[3] NIST Cybersecurity Framework Profile for AI (Dec 2025)

// MATRIXSECHUB INTELLIGENCE DIVISION