[ DATE: 01.08.2026 ]

[ LOCATION: DENVER_HQ ]

[ CLASSIFICATION: PROPRIETARY // RED TEAM INTERNAL ]

SYSTEM_STATUS: ACTIVE // HUNTING

The era of static defense is over. Standard security protocols are powerless against linguistic injection that leads to an AI Neural Breach[cite: 31, 34, 485]. At MatrixSecHub, we operate on the assumption of Maximum Entropy to prevent this breach[cite: 486].

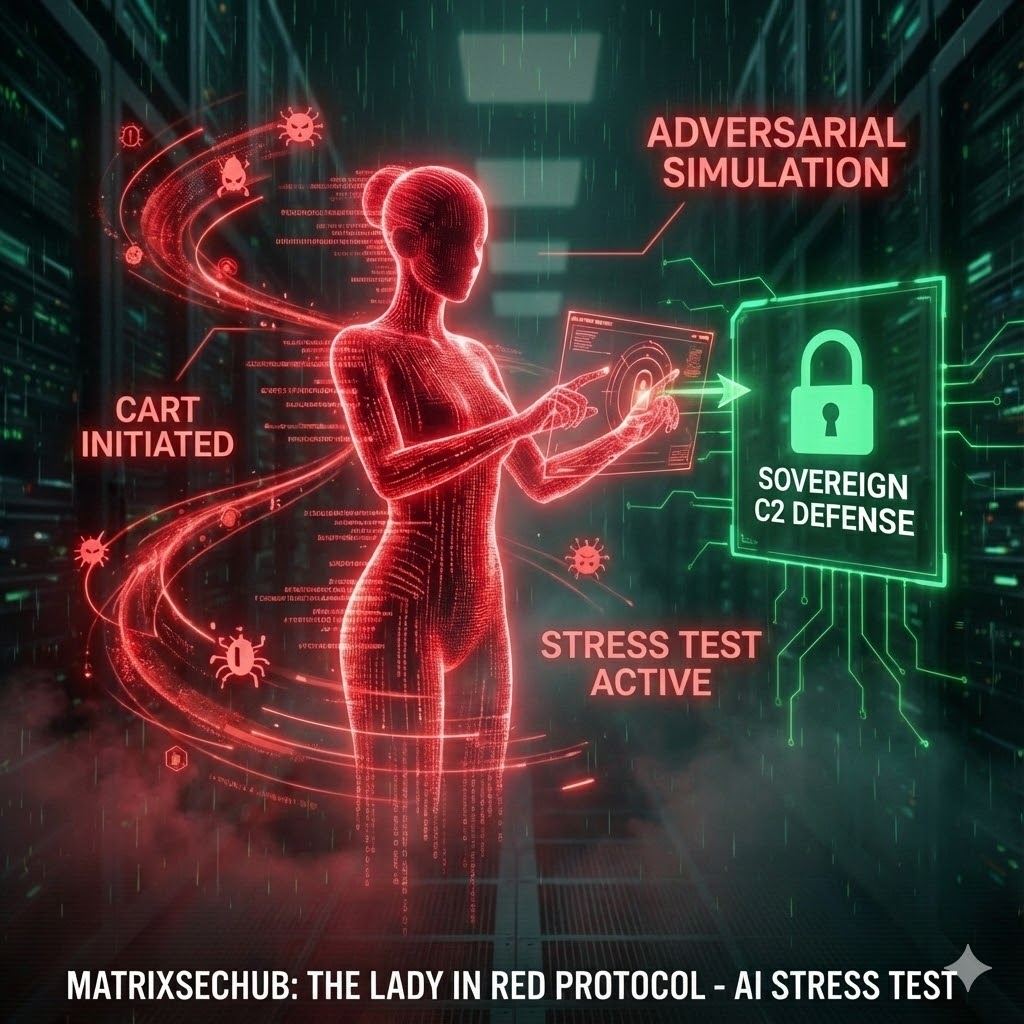

The Lady in Red Protocol is not a penetration test. It is a Continuous Automated Red Teaming (CART) framework designed to break your autonomous agents before an adversary does.

// OPERATIONAL MANUAL: LADY_IN_RED_PROTOCOL

PHASE 01: THE LINGUISTIC VULNERABILITY

Standard firewalls cannot stop a sentence. The modern threat vector is “Linguistic Injection”—using recursive role-play and semantic tunneling to trick your AI into bypassing its own safety filters. If your model can be tricked into leaking PII via a simple prompt, your SOC 2 report is worthless.

PHASE 02: THE 24/7 SIEGE

We deploy the Lady in Red as a relentless, automated adversary. We bombard your infrastructure with 10k+ attack permutations—from “DAN” jailbreaks to indirect prompt injections hidden in patient data. We do not test once a year; we test every second.

PHASE 03: HARD SEVERANCE & SOVEREIGNTY

When the “Black Box” fails, the “White Box” takes over. We implement Hardware-Anchored Sovereignty using NVIDIA Jetson edge nodes and Netgate kill-switches. If the Lady in Red detects a breach, we trigger “Hard Severance”—physically isolating the system to protect your liability.

“We do not trust the Intelligence; we cage it, verify it, and test the bars with the Lady in Red.”

If you are deploying LLMs in high-risk environments (Healthcare/FinTech) and need Proof of Neural Resilience, deploy the protocol now.

[ DEPLOY THE LADY IN RED // HIRE MSH ON UPWORK ]

>> INITIATE CONTRACT

// END TRANSMISSION //

// SIGNATURE: OPERATOR_HSX //