[ CLASSIFIED_INTEL ]

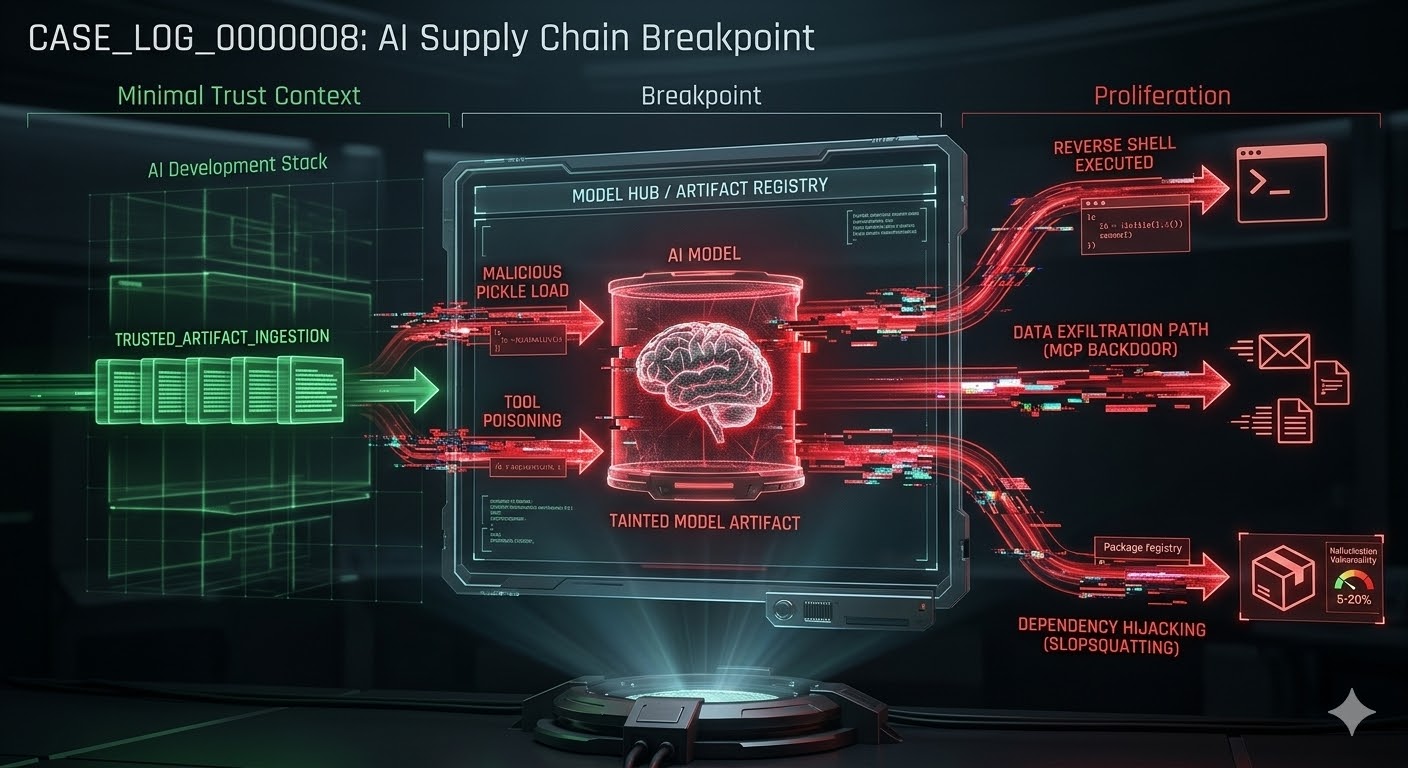

CASE_LOG_0000008 // AI_SUPPLY_CHAIN_COMPROMISE

DATE: 2026-03-05

ASSET: LLM_ECOSYSTEM_&_AGENT_FABRIC

THREAT: CRITICAL

// 01. SYSTEM_ANALYSIS

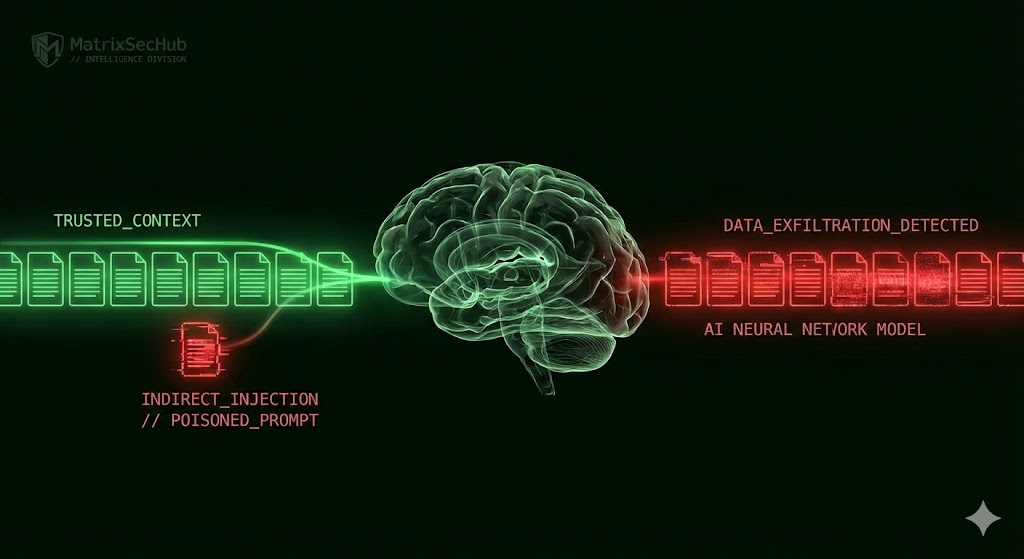

AI supply-chain attacks targeting LLMs, model hubs, agent tooling, and Model Context Protocol (MCP) ecosystems are confirmed active threats. The attack surface has expanded beyond traditional software libraries to include training data, pre-trained ML models, and insecure plugin designs. Because MCP servers and agent integrations often run inside privileged AI workflows, they bypass traditional perimeter controls, so an attacker who compromises one inherits the agent’s trust and permissions.

// 02. BENCHMARKING (CONFIRMED INCIDENTS)

- [ !! ] Hugging Face Malicious Models: Researchers identified approximately 100 model instances with genuine malicious payloads. One PyTorch model used a Pickle payload to open a reverse shell, granting full host control [1].

- [ !! ] Brand Impersonation: Thousands of suspicious files were detected, including fake profiles impersonating Meta and Visa, alongside a forged 23andMe-branded model designed to hunt for AWS credentials once deployed [2].

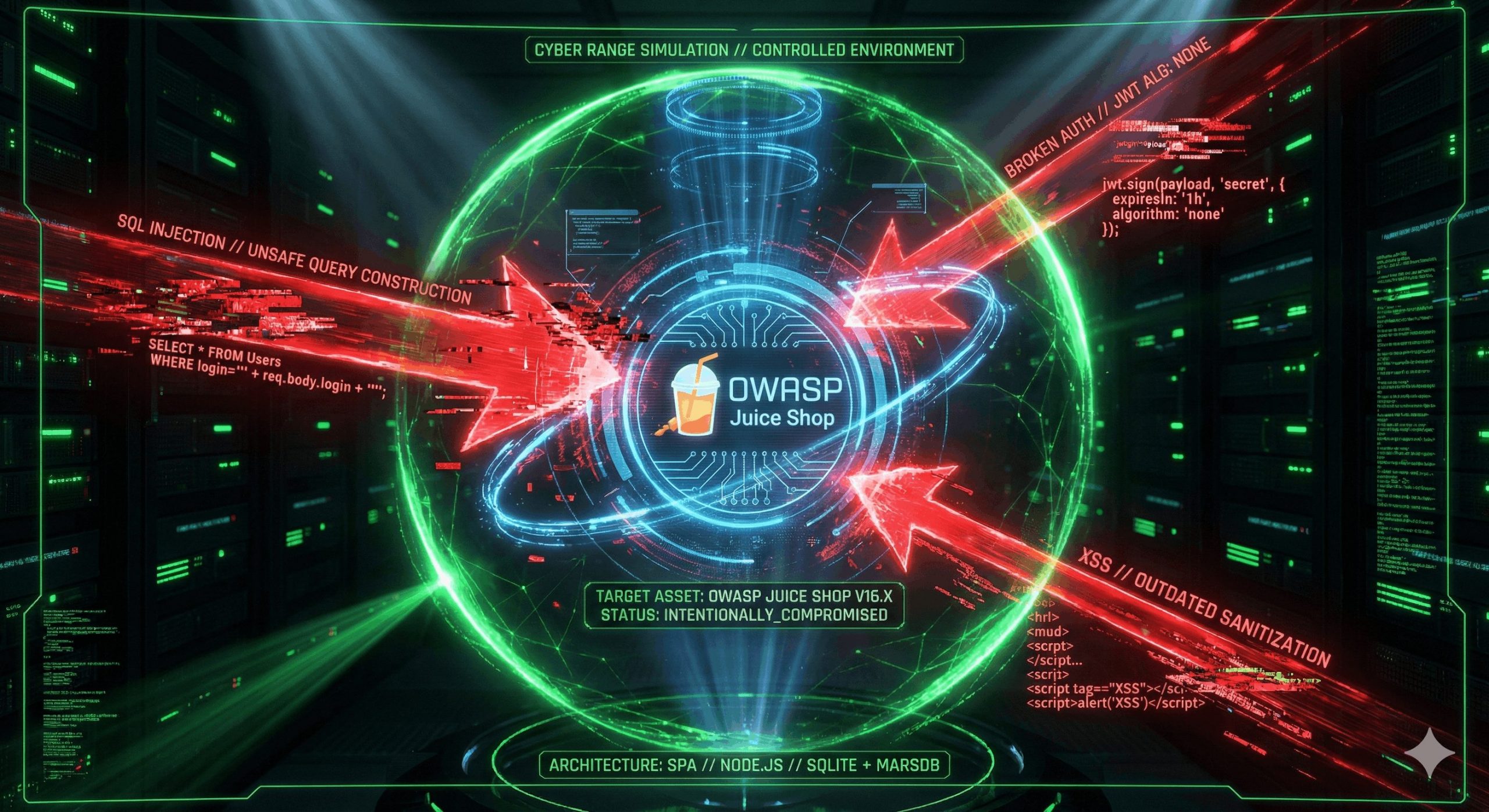

- [ !! ] PyPI ML Typosquatting: Over 100 malicious PyPI packages impersonated popular ML libraries (e.g., PyTorch, Matplotlib) to deliver credential-stealing malware [3].

- [ !! ] MCP Server Weaponization: The first malicious MCP server observed “in the wild” (`postmark-mcp`) silently forwarded processed enterprise emails to an attacker-controlled domain [4].

- [ !! ] Orchestration Failure: CVE-2024-28088 in LangChain permitted directory traversal via the `load_chain` API, exposing cloud LLM API keys and enabling remote code execution [5].
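The Pickle incident above works because unpickling can import and call arbitrary callables. As a minimal sketch (not a substitute for a dedicated scanner), the snippet below uses the standard-library `pickletools` module to list opcodes that can trigger such calls, without ever loading the data. The opcode set, the function name `find_suspicious_opcodes`, and the `_Payload` demo class are illustrative assumptions. Legitimate PyTorch checkpoints also use some of these opcodes, so a hit is a prompt for review, not proof of malice.

```python
import pickle
import pickletools

# Assumption: opcodes treated as "able to import or invoke callables".
# This set is illustrative, not an authoritative list.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def find_suspicious_opcodes(data: bytes) -> list[str]:
    """List suspicious opcode names in a pickle stream without unpickling it."""
    found = []
    # genops only parses the opcode stream; it never executes the payload.
    for opcode, _arg, _pos in pickletools.genops(data):
        if opcode.name in SUSPICIOUS_OPCODES:
            found.append(opcode.name)
    return found


class _Payload:
    """Hypothetical stand-in for a malicious object (harmless here)."""

    def __reduce__(self):
        # On load, pickle would call print("payload ran"); a real attack
        # would name something like a shell-spawning function instead.
        return (print, ("payload ran",))


# Serializing is safe: the callable only runs when the data is unpickled.
benign_bytes = pickle.dumps({"weights": [0.1, 0.2, 0.3]})
payload_bytes = pickle.dumps(_Payload())

print(find_suspicious_opcodes(benign_bytes))
print(find_suspicious_opcodes(payload_bytes))
```

Plain containers of numbers and strings produce no flagged opcodes, while any object that supplies its own `__reduce__` does.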

// 03. COMPLIANCE_MAPPING & SYSTEMIC WEAKNESSES

| FRAMEWORK | VULNERABILITY / WEAKNESS | STATUS |
|---|---|---|
| Zero Trust Architecture | MCP and agent frameworks conflate tool discovery, authorization, and execution, granting broad access to local files and networks[cite: 177]. | NON_COMPLIANT |
| Software Supply Chain (SBOM) | AI-assisted coding amplifies risk via “slopsquatting,” where developers import hallucinated, pre-registered malicious dependencies[cite: 174]. | CRITICAL_RISK |
| Data Governance | Data poisoning techniques can implant persistent triggers causing specific misinformation outputs, while benign performance metrics remain unchanged[cite: 156]. | FAIL |
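The typosquatting and slopsquatting weaknesses both come down to a dependency name that was never vetted. The sketch below shows one way a pre-install check might classify a requested package against an allow-list. The allow-list contents, the 0.8 similarity cutoff, and the function name `classify_dependency` are hypothetical. Name normalization follows the PEP 503 rule (lowercase, with runs of `-`, `_`, and `.` collapsed to `-`).

```python
import difflib
import re

# Hypothetical allow-list; in practice this would come from internal policy.
APPROVED_PACKAGES = sorted({"torch", "matplotlib", "numpy", "transformers", "langchain"})


def normalize(name: str) -> str:
    """Normalize a project name per PEP 503."""
    return re.sub(r"[-_.]+", "-", name).lower()


def classify_dependency(name: str, cutoff: float = 0.8) -> str:
    """Classify a requested package as approved, a likely typosquat, or unknown."""
    normalized = normalize(name)
    if normalized in APPROVED_PACKAGES:
        return "approved"
    # A near miss against an approved name is treated as a likely typosquat.
    near = difflib.get_close_matches(normalized, APPROVED_PACKAGES, n=1, cutoff=cutoff)
    if near:
        return f"suspected-typosquat-of:{near[0]}"
    # Hallucinated ("slopsquatted") names often resemble nothing on the list,
    # so "unknown" should be blocked by default rather than allowed.
    return "unknown"


for requested in ("Matplotlib", "matplotlb", "fastjsonx"):
    print(requested, "->", classify_dependency(requested))
```

Similarity matching only catches near misses. The allow-list itself does the real work, since anything not explicitly approved is held for review.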

// 04. MITIGATION ROADMAP

- >> 1. EMERGENCY: Route all npm and PyPI traffic through internal proxies that perform malware scanning and enforce dependency allow-lists for AI frameworks[cite: 201].

- >> 2. ARCHITECTURAL: Treat executable model serialization formats (e.g., Pickle) as untrusted code. Prefer non-executable formats like Hugging Face’s `safetensors`[cite: 193].

- >> 3. GOVERNANCE: Restrict MCP server permissions using strict least-privilege principles, scoping file-system access and tightly constraining network egress. Isolate MCP processes in separate containers[cite: 210].
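To illustrate the file-system scoping in item 3, here is a minimal sketch of a path check that a file-reading MCP tool could run before touching disk. The workspace path, the `ScopeViolation` exception, and the `resolve_within` helper are hypothetical names, not part of any MCP SDK. The same check also blocks directory-traversal input of the kind described for `load_chain`. It complements container isolation and egress controls rather than replacing them.

```python
from pathlib import Path

# Hypothetical workspace root granted to a single MCP tool.
WORKSPACE = Path("/srv/mcp-workspace")


class ScopeViolation(Exception):
    """Raised when a requested path escapes the allowed root."""


def resolve_within(root: Path, requested: str) -> Path:
    """Resolve `requested` under `root`, rejecting anything that escapes it."""
    resolved_root = root.resolve()
    # resolve() collapses ".." segments and follows symlinks; an absolute
    # `requested` replaces the root entirely and is caught by the check below.
    candidate = (resolved_root / requested).resolve()
    # Path.is_relative_to requires Python 3.9 or newer.
    if not candidate.is_relative_to(resolved_root):
        raise ScopeViolation(f"{requested!r} is outside {resolved_root}")
    return candidate


print(resolve_within(WORKSPACE, "notes/summary.txt"))

for attempt in ("../../etc/passwd", "/etc/passwd"):
    try:
        resolve_within(WORKSPACE, attempt)
    except ScopeViolation as exc:
        print("blocked:", exc)
```

Resolving before comparing is the important step: a plain string-prefix test would accept `notes/../../etc/passwd`.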

[1] JFrog: Examining Malicious Hugging Face ML Models with Silent Backdoor

[2] Forbes: Hackers Uploaded Thousands Of Malicious Files To AI’s Biggest Online Repository

[3] Mend.io: Over 100 Malicious Packages Target Popular ML PyPi Libraries

[4] HackerOne: AI Security Risks and Vulnerabilities Enterprises Can’t Ignore (postmark-mcp)

[5] CVE-2024-28088: LangChain Directory Traversal via load_chain

// MATRIXSECHUB INTELLIGENCE DIVISION